Multi-Scale Temporal Pattern Learning for Transformer-Based Time Series Forecasting

Enhancing PatchTST with selective multi-scale patching to capture rich temporal dynamics

Project Overview

// The Problem

Transformer-based time series models such as PatchTST rely on a single, fixed patch size to tokenize input sequences. While effective, this design limits the model's ability to capture the diverse temporal patterns found in real-world time series, where short-term fluctuations, medium-term dependencies, and long-term trends often coexist. Forecasting performance is also highly sensitive to the choice of patch size, and the best value differs from dataset to dataset.
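To make the patch-size trade-off concrete, here is a minimal sketch of PatchTST-style patching on a toy 96-step window. It is simplified and assumes no end padding (the original PatchTST also pads the end of the series before patching), and it assumes a stride of half the patch length. `make_patches` is a hypothetical helper, not part of any library.

```python
def make_patches(series, patch_len, stride):
    """Split a 1-D series into (possibly overlapping) patches of length patch_len.

    Simplified PatchTST-style patching (assumption: no end padding), so the
    number of patches is floor((len(series) - patch_len) / stride) + 1.
    """
    if patch_len > len(series):
        raise ValueError("patch_len must not exceed the series length")
    return [series[start:start + patch_len]
            for start in range(0, len(series) - patch_len + 1, stride)]


lookback = list(range(96))  # toy input window of 96 time steps
for patch_len in (8, 16, 32):
    patches = make_patches(lookback, patch_len, stride=patch_len // 2)
    print(patch_len, len(patches))
# prints:
# 8 23
# 16 11
# 32 5
```

A single patch size forces one compromise: small patches yield many short tokens that preserve local detail but lengthen the attention sequence, while large patches yield a few tokens that each summarize a longer span.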

// The Solution

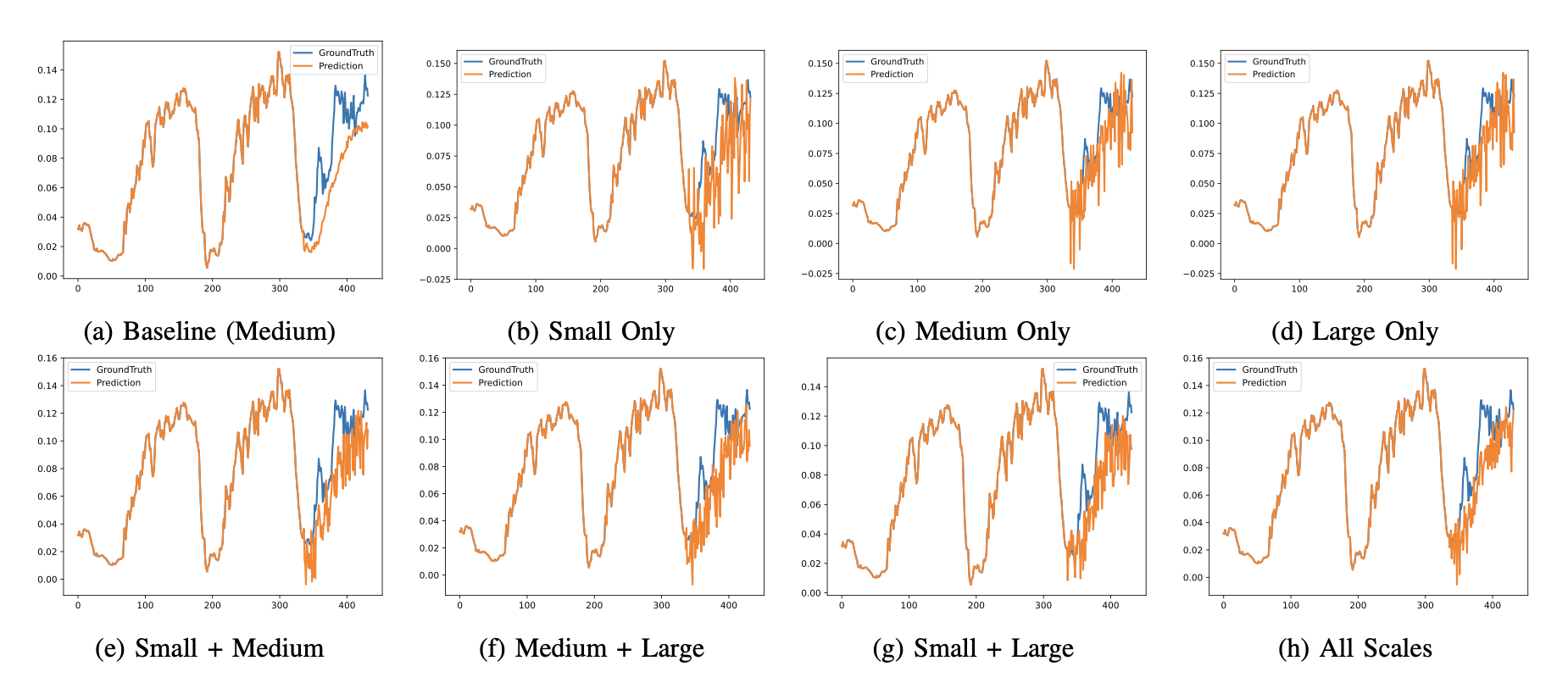

We propose Selective Multi-Scale PatchTST (MS-PatchTST), a novel architecture that processes time series inputs simultaneously across multiple patch sizes. Each temporal scale is handled by an independent PatchTST backbone, and their forecasts are combined using a learned fusion layer that dynamically weights each scale’s contribution. This selective design allows practitioners to balance forecasting accuracy and computational cost by choosing the most relevant set of scales.
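The exact form of the fusion layer is not specified here, so the following is only a sketch of one plausible design: a softmax-weighted average of the per-scale forecasts, with one score (logit) per scale. In a trained model these logits would be learnable parameters, or the output of a small input-dependent gating network if the weighting should vary per sample; here they are fixed numbers for illustration. `softmax` and `fuse_forecasts` are hypothetical helpers.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_forecasts(forecasts, logits):
    """Combine per-scale forecasts with softmax-normalized weights.

    forecasts: list of equal-length lists, one forecast per patch size.
    logits: one score per scale (assumed learnable in a real model).
    Returns the fused forecast and the weights assigned to each scale.
    """
    if len(forecasts) != len(logits):
        raise ValueError("need one logit per scale")
    weights = softmax(logits)
    horizon = len(forecasts[0])
    fused = [sum(w * f[t] for w, f in zip(weights, forecasts))
             for t in range(horizon)]
    return fused, weights


# Toy forecasts from three backbones (e.g. patch sizes 8, 16, 32).
per_scale = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
fused, weights = fuse_forecasts(per_scale, [0.0, 0.0, 0.0])
print(weights)  # equal weights of 1/3 each
print(fused)    # ≈ [3.0, 4.0], the per-step mean
```

Because the weights are normalized with a softmax, they always sum to 1, so training can shift emphasis toward the most useful scales without changing the overall magnitude of the forecast.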

// The Impact

The proposed approach improves long-term forecasting accuracy by 5–15% over the original PatchTST on benchmark datasets such as Weather and Electricity, while reducing sensitivity to patch-size hyperparameters. The model provides a flexible and extensible framework for multi-scale temporal reasoning in Transformer-based forecasting systems.

Architecture: parallel multi-scale PatchTST backbones with independent patch sizes, followed by a learned fusion layer that produces the final forecast.

Key Features

- Parallel multi-scale patch-based Transformer backbones

- Learned fusion mechanism for dynamic scale weighting

- Selective configuration to trade off accuracy and computation

- Improved robustness to patch-size hyperparameter choices

- Compatible with standard long-term forecasting benchmarks
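The accuracy/computation trade-off of the selective configuration can be estimated roughly from token counts, since self-attention cost grows with the square of the number of patches per backbone. The sketch below assumes a lookback of 336 steps, strides of half the patch length, and the same simplified patch count as above; it ignores feed-forward layers, embeddings, and the fusion layer. `num_patches` and `attention_pairs` are hypothetical helpers for illustration only.

```python
def num_patches(lookback, patch_len, stride):
    """Simplified PatchTST-style patch count (assumption: no end padding)."""
    return (lookback - patch_len) // stride + 1


def attention_pairs(lookback, scales):
    """Rough per-layer self-attention cost: sum of N^2 over selected scales.

    scales: iterable of (patch_len, stride) pairs, one per backbone.
    """
    return sum(num_patches(lookback, p, s) ** 2 for p, s in scales)


all_scales = [(8, 4), (16, 8), (32, 16)]
selected = [(16, 8), (32, 16)]  # drop the finest, most expensive scale
print(attention_pairs(336, all_scales), attention_pairs(336, selected))
# prints: 8970 2081
```

Under these assumptions the finest scale dominates the cost (83 tokens versus 41 and 20), so dropping it cuts the attention cost by roughly a factor of four. Whether that is worthwhile depends on how much accuracy the fine scale contributes on a given dataset.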