BrainForge - Deep Learning for EEG Source Localization

Solving the EEG inverse problem with deep learning for more accurate localization of brain activity

Project Overview

// The Problem

Electroencephalography (EEG) records electrical signals at the scalp. Identifying the brain regions that actually generate these signals, known as the EEG inverse problem, is fundamentally ill-posed: many different source configurations can produce the same scalp recording. Traditional mathematical models suffer from low spatial resolution, sensitivity to noise, and poor deep-brain localization, while existing deep learning approaches require massive synthetic datasets and struggle to generalize across patients and electrode setups.
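
To make the ill-posedness concrete, here is a toy sketch. It assumes the usual linear forward model, in which scalp measurements are a leadfield matrix times the source amplitudes. The 2-electrode, 3-source leadfield and all values below are invented for illustration and do not come from the project.

```python
# Toy illustration of non-uniqueness in the EEG inverse problem.
# Assumption: a linear forward model y = L x, where the leadfield L maps
# source amplitudes x to scalp measurements y. All numbers are made up.

def forward(leadfield, sources):
    """Project source amplitudes onto the electrodes (matrix-vector product)."""
    return [sum(w * s for w, s in zip(row, sources)) for row in leadfield]

# 2 electrodes, 3 candidate sources: fewer measurements than unknowns.
LEADFIELD = [
    [1.0, 0.0, 1.0],
    [0.0, 1.0, 1.0],
]

sources_a = [1.0, 1.0, 0.0]  # sources 0 and 1 active
sources_b = [0.0, 0.0, 1.0]  # only source 2 active

y_a = forward(LEADFIELD, sources_a)
y_b = forward(LEADFIELD, sources_b)

print("scalp reading A:", y_a)
print("scalp reading B:", y_b)
print("identical readings from different sources:", y_a == y_b)
```

Both configurations yield the same scalp reading, so the measurement alone cannot tell them apart. Real setups have tens to hundreds of electrodes and thousands of candidate sources, which makes the ambiguity far larger.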

// The Solution

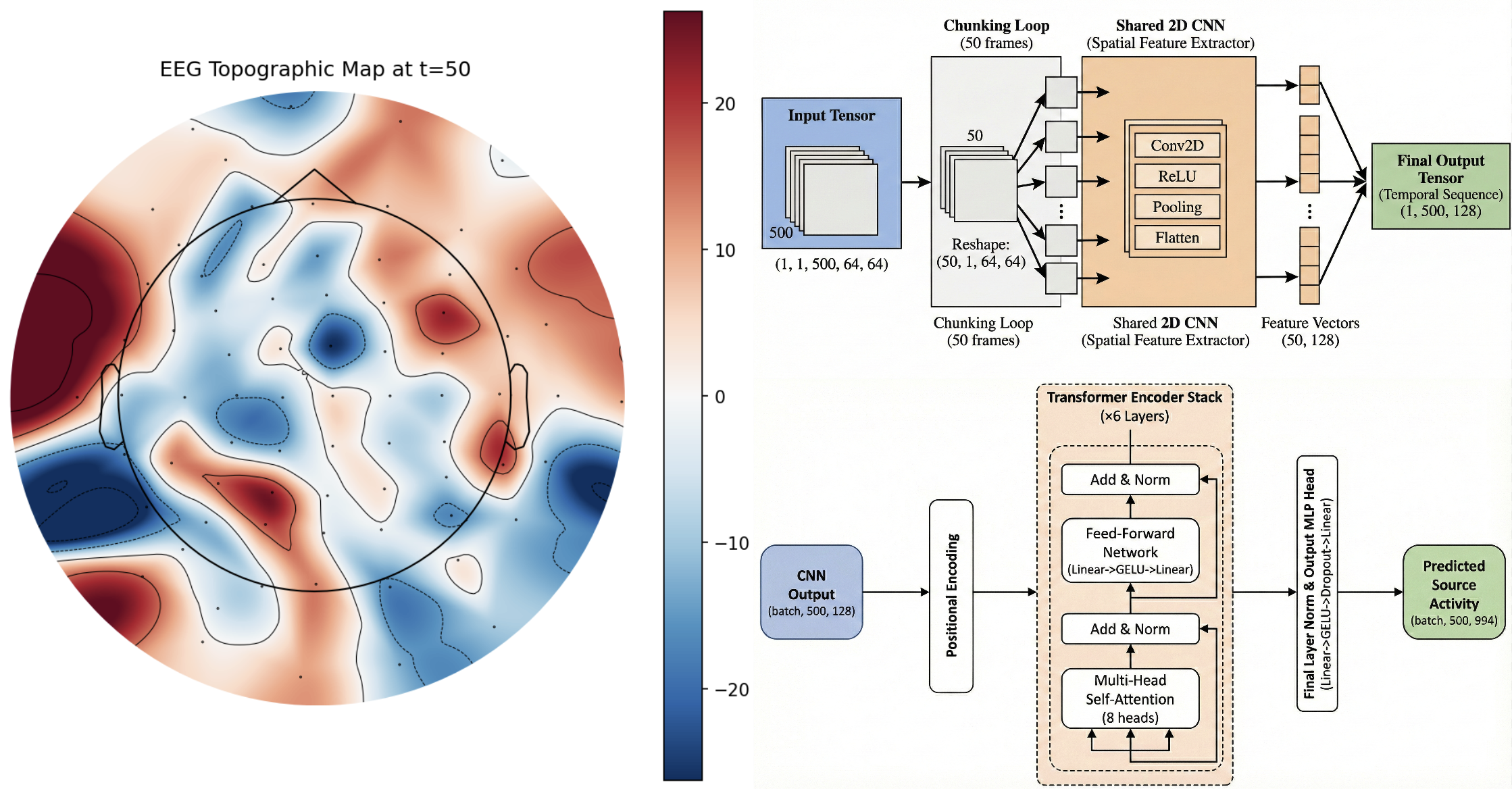

We propose a novel end-to-end framework that combines optimized synthetic EEG data generation with a hybrid CNN-Transformer neural network. EEG signals are first converted into 2D scalp topography maps, processed by a CNN-based spatial module to extract spatial features, and then passed through a Transformer-based temporal module to model time-dependent brain dynamics. This allows the model to learn rich spatio-temporal representations of neural activity without relying on slow, recurrent architectures.
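
The first step described above, turning multichannel EEG into 2D scalp topography maps, might look roughly like the sketch below. The interpolation scheme the project actually uses is not stated here, so this assumes simple inverse-distance weighting. The function name `eeg_to_topomaps`, the 16x16 grid, and the random electrode positions are all hypothetical.

```python
import numpy as np

def eeg_to_topomaps(eeg, electrode_xy, grid_size=16, power=2.0, eps=1e-6):
    """Interpolate every time sample onto a square grid (inverse-distance weighting).

    eeg          : (n_channels, n_times) scalp potentials
    electrode_xy : (n_channels, 2) 2D electrode positions, assumed in [-1, 1]
    returns      : (n_times, grid_size, grid_size) topography maps
    """
    axis = np.linspace(-1.0, 1.0, grid_size)
    gx, gy = np.meshgrid(axis, axis)
    pixels = np.stack([gx.ravel(), gy.ravel()], axis=1)        # (G*G, 2)

    # Distance from every grid pixel to every electrode: (G*G, n_channels).
    dist = np.linalg.norm(pixels[:, None, :] - electrode_xy[None, :, :], axis=2)

    # Closer electrodes get larger weights; each pixel's weights sum to 1.
    weights = 1.0 / (dist + eps) ** power
    weights /= weights.sum(axis=1, keepdims=True)

    maps = weights @ eeg                                       # (G*G, n_times)
    return maps.T.reshape(eeg.shape[1], grid_size, grid_size)

# Usage with placeholder data: 32 channels, 100 time samples.
rng = np.random.default_rng(0)
eeg = rng.standard_normal((32, 100))
electrode_xy = rng.uniform(-0.8, 0.8, size=(32, 2))  # stand-in for a real montage
topomaps = eeg_to_topomaps(eeg, electrode_xy)
print(topomaps.shape)  # (100, 16, 16)
```

Because each pixel is a weighted average of the channel values, the same code works for any electrode count or layout, which is one reason an image-like representation is convenient when electrode setups vary.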

// The Impact

The proposed system is designed to significantly reduce synthetic data storage requirements (from ~4 TB to ~20 GB, roughly a 200-fold reduction) and simulation time (from ~6000 hours to ~500 hours, a 12-fold reduction) while improving localization accuracy, robustness to noise, and the ability to identify multiple simultaneous brain sources, including deep-brain structures such as the thalamus and hippocampus.

Two-stage deep learning pipeline with CNN-based spatial feature extraction and Transformer-based temporal modeling.
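
A minimal PyTorch sketch of such a two-stage pipeline is shown below. It is not the project's actual network: the class name `CNNTransformerLocalizer`, the layer sizes, the learned positional embedding, and the per-time-step output over 100 candidate sources are assumptions chosen only to illustrate how a CNN spatial module can feed a Transformer temporal module.

```python
import torch
from torch import nn

class CNNTransformerLocalizer(nn.Module):
    """Hypothetical two-stage model: per-frame CNN, then a Transformer over time."""

    def __init__(self, n_sources=100, d_model=64, n_heads=4, n_layers=2, max_len=512):
        super().__init__()
        # Stage 1: spatial module, applied independently to each topography map.
        self.spatial = nn.Sequential(
            nn.Conv2d(1, 16, kernel_size=3, padding=1), nn.ReLU(),
            nn.MaxPool2d(2),
            nn.Conv2d(16, 32, kernel_size=3, padding=1), nn.ReLU(),
            nn.AdaptiveAvgPool2d(1),   # collapse each feature map to one value
            nn.Flatten(),
            nn.Linear(32, d_model),
        )
        # Learned positional embedding so the Transformer can see time order.
        self.pos = nn.Parameter(torch.zeros(1, max_len, d_model))
        # Stage 2: temporal module, self-attention across time steps.
        layer = nn.TransformerEncoderLayer(
            d_model=d_model, nhead=n_heads, dim_feedforward=128, batch_first=True
        )
        self.temporal = nn.TransformerEncoder(layer, num_layers=n_layers)
        # Output head: one activation score per candidate source and time step.
        self.head = nn.Linear(d_model, n_sources)

    def forward(self, topomaps):
        # topomaps: (batch, time, height, width)
        b, t, h, w = topomaps.shape
        frames = topomaps.reshape(b * t, 1, h, w)       # treat each frame as an image
        feats = self.spatial(frames).reshape(b, t, -1)  # (batch, time, d_model)
        feats = self.temporal(feats + self.pos[:, :t])
        return self.head(feats)                         # (batch, time, n_sources)

# Usage with random input: 2 recordings, 50 time steps, 16x16 maps.
model = CNNTransformerLocalizer().eval()
with torch.no_grad():
    out = model(torch.randn(2, 50, 16, 16))
print(out.shape)  # torch.Size([2, 50, 100])
```

Unlike a recurrent network, the Transformer stage processes all time steps in parallel, which is the speed advantage the overview refers to.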

Tech Stack

Key Features

- Hybrid CNN-Transformer architecture for spatio-temporal EEG source imaging

- Efficient synthetic EEG data generation pipeline

- Support for multiple simultaneous neural source activations

- Reduced dependency on large leadfield-specific datasets

- Improved robustness to noise and to differences in electrode configuration
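
As a rough illustration of the synthetic data generation and multi-source features listed above, the sketch below simulates one training pair by activating a few random sources, projecting them through a leadfield, and adding noise at a chosen signal-to-noise ratio. It is a generic recipe, not the project's optimized pipeline. The function name `simulate_sample`, the sinusoidal source waveforms, and the random leadfield (a stand-in for one computed from a real head model) are all assumptions.

```python
import numpy as np

def simulate_sample(leadfield, n_active=2, n_times=100, snr_db=10.0, rng=None):
    """Generate one synthetic (eeg, sources, active_indices) training sample.

    leadfield : (n_channels, n_sources) forward matrix
    """
    rng = np.random.default_rng() if rng is None else rng
    n_channels, n_sources = leadfield.shape

    # Pick several distinct sources to be active at the same time.
    active = rng.choice(n_sources, size=n_active, replace=False)
    sources = np.zeros((n_sources, n_times))
    t = np.arange(n_times) / n_times
    for idx in active:
        freq = rng.uniform(4.0, 30.0)          # arbitrary oscillation frequency
        phase = rng.uniform(0.0, 2.0 * np.pi)
        sources[idx] = np.sin(2.0 * np.pi * freq * t + phase)

    # Forward projection to the scalp, then additive Gaussian sensor noise
    # scaled so that the signal-to-noise ratio equals snr_db.
    clean = leadfield @ sources
    noise_power = np.mean(clean ** 2) / (10.0 ** (snr_db / 10.0))
    noise = rng.standard_normal(clean.shape) * np.sqrt(noise_power)
    return clean + noise, sources, active

# Usage with a placeholder leadfield: 32 electrodes, 500 candidate sources.
rng = np.random.default_rng(0)
leadfield = rng.standard_normal((32, 500))
eeg, sources, active = simulate_sample(leadfield, n_active=3, rng=rng)
print(eeg.shape, sources.shape, len(active))  # (32, 100) (500, 100) 3
```

Generating samples on demand from a seed like this, rather than writing every sample to disk, is one common way to keep storage small. Whether the project takes that route is not stated here.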